Cross-cluster search

You can use the cross-cluster search feature in Lucenia to search and analyze data across multiple clusters, enabling you to gain insights from distributed data sources. Cross-cluster search is available by default with the Security plugin, but you need to configure each cluster to allow remote connections from other clusters. This involves setting up remote cluster connections and configuring access permissions.

Authentication flow

The following sequence describes the authentication flow when using cross-cluster search to access a remote cluster from a coordinating cluster. You can have different authentication and authorization configurations on the remote and coordinating clusters, but we recommend using the same settings on both.

- The Security plugin authenticates the user on the coordinating cluster.

- The Security plugin fetches the user's backend roles on the coordinating cluster.

- The call, including the authenticated user, is forwarded to the remote cluster.

- The user's permissions are evaluated on the remote cluster.

Setting permissions

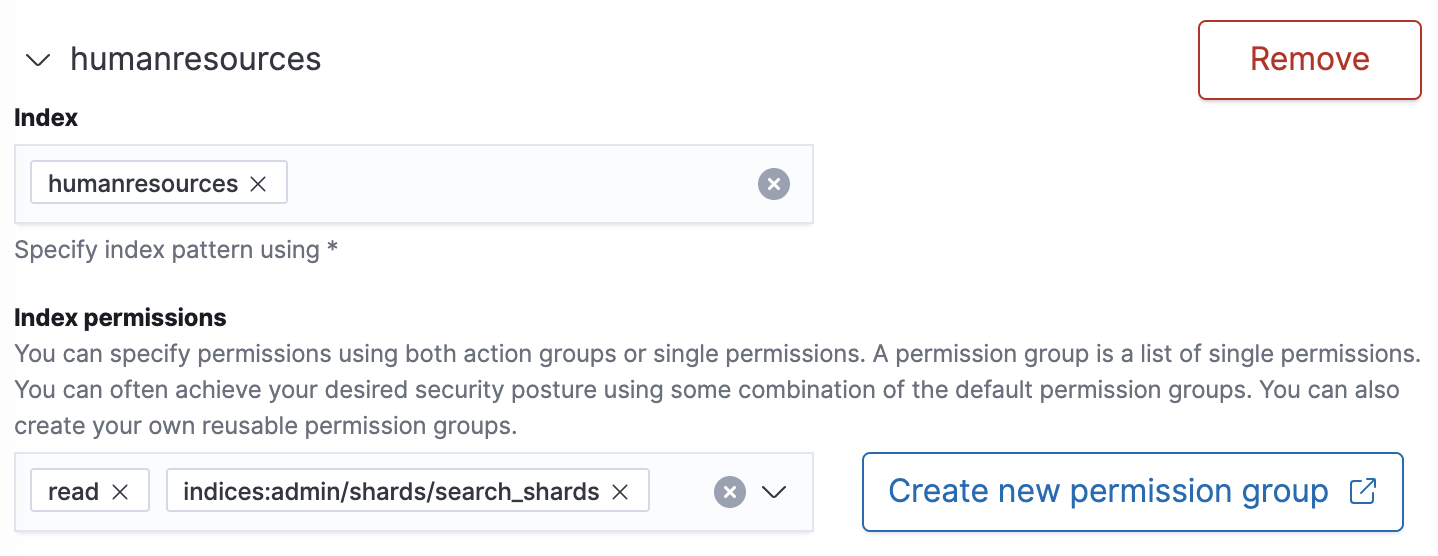

To query indexes on remote clusters, users must have READ or SEARCH permissions. Furthermore, when the search request includes the query parameter ccs_minimize_roundtrips=false (which tells Lucenia not to minimize outgoing and incoming requests to remote clusters), users need the following additional index permission:

indices:admin/shards/search_shards

For more information about the ccs_minimize_roundtrips parameter, see the list of URL Parameters for the Search API.
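As a rough illustration of where the parameter goes, the following sketch builds a search URL that sets ccs_minimize_roundtrips=false. The host, port, cluster alias, and index name are borrowed from the Docker sample on this page, and the helper name ccs_search_url is hypothetical.

```shell
# Hypothetical helper: print a cross-cluster search URL that sets
# ccs_minimize_roundtrips=false.
# Arguments: <base-url> <remote-cluster-alias> <index>
ccs_search_url() {
  printf '%s/%s:%s/_search?ccs_minimize_roundtrips=false\n' "$1" "$2" "$3"
}

ccs_search_url 'https://localhost:9250' 'lucenia-ccs-cluster1' 'books'

# To send the request (assumes the Docker sample clusters are running):
# curl -XGET -k -u 'admin:<custom-admin-password>' \
#   "$(ccs_search_url 'https://localhost:9250' 'lucenia-ccs-cluster1' 'books')"
```

A user sending this request would need the indices:admin/shards/search_shards permission on the remote index in addition to READ or SEARCH.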

Example roles.yml configuration

humanresources:
  cluster:
    - CLUSTER_COMPOSITE_OPS_RO
  indices:
    'humanresources':
      '*':
        - READ
        - indices:admin/shards/search_shards # needed when the search request includes parameter setting 'ccs_minimize_roundtrips=false'.

Example role in OpenSearch Dashboards

Sample Docker setup

To try this setup with Docker, save the following sample file as docker-compose.yml and run docker-compose up to start two single-node clusters on the same network:

version: '3'
services:
  lucenia-ccs-node1:
    image: lucenia/lucenia:0.9.0
    container_name: lucenia-ccs-node1
    environment:
      - cluster.name=lucenia-ccs-cluster1
      - discovery.type=single-node
      - bootstrap.memory_lock=true # along with the memlock settings below, disables swapping
      - "LUCENIA_JAVA_OPTS=-Xms512m -Xmx512m" # minimum and maximum Java heap size, recommend setting both to 50% of system RAM
      - "LUCENIA_INITIAL_ADMIN_PASSWORD=<custom-admin-password>" # The initial admin password used by the demo configuration
    ulimits:
      memlock:
        soft: -1
        hard: -1
    volumes:
      - lucenia-data1:/usr/share/lucenia/data
    ports:
      - 9200:9200
      - 9600:9600 # required for Performance Analyzer
    networks:
      - lucenia-net
  lucenia-ccs-node2:
    image: lucenia/lucenia:0.9.0
    container_name: lucenia-ccs-node2
    environment:
      - cluster.name=lucenia-ccs-cluster2
      - discovery.type=single-node
      - bootstrap.memory_lock=true # along with the memlock settings below, disables swapping
      - "LUCENIA_JAVA_OPTS=-Xms512m -Xmx512m" # minimum and maximum Java heap size, recommend setting both to 50% of system RAM
      - "LUCENIA_INITIAL_ADMIN_PASSWORD=<custom-admin-password>" # The initial admin password used by the demo configuration
    ulimits:
      memlock:
        soft: -1
        hard: -1
    volumes:
      - lucenia-data2:/usr/share/lucenia/data
    ports:
      - 9250:9200
      - 9700:9600 # required for Performance Analyzer
    networks:
      - lucenia-net
volumes:
  lucenia-data1:
  lucenia-data2:
networks:
  lucenia-net:

After the clusters start, verify the name of each cluster using the following commands:

curl -XGET -u 'admin:<custom-admin-password>' -k 'https://localhost:9200'

{
  "cluster_name" : "lucenia-ccs-cluster1",
  ...
}

curl -XGET -u 'admin:<custom-admin-password>' -k 'https://localhost:9250'

{
  "cluster_name" : "lucenia-ccs-cluster2",
  ...
}

Both clusters run on localhost, so the important identifier is the port number. In this case, use port 9200 (lucenia-ccs-node1) as the remote cluster, and port 9250 (lucenia-ccs-node2) as the coordinating cluster.

To get the IP address for the remote cluster, first identify its container ID:

docker ps

CONTAINER ID   IMAGE                   PORTS                                                      NAMES
6fe89ebc5a8e   lucenia/lucenia:0.9.0   0.0.0.0:9200->9200/tcp, 0.0.0.0:9600->9600/tcp, 9300/tcp   lucenia-ccs-node1
2da08b6c54d8   lucenia/lucenia:0.9.0   9300/tcp, 0.0.0.0:9250->9200/tcp, 0.0.0.0:9700->9600/tcp   lucenia-ccs-node2

Then get that container's IP address:

docker inspect --format='{{range .NetworkSettings.Networks}}{{.IPAddress}}{{end}}' 6fe89ebc5a8e

172.31.0.3
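docker inspect also accepts a container name in place of the ID, so the two steps above can be collapsed into one. The following is a minimal sketch; the function name container_ip is hypothetical, and the container name comes from the sample docker-compose.yml file.

```shell
# Hypothetical helper: print the IP address(es) of a container.
# Argument: <container-name-or-id>
container_ip() {
  docker inspect \
    --format='{{range .NetworkSettings.Networks}}{{.IPAddress}}{{end}}' \
    "$1"
}

# Usage (requires the sample containers to be running):
# container_ip lucenia-ccs-node1
```

The address Docker assigns can differ between runs, so it is worth looking it up again after recreating the containers.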

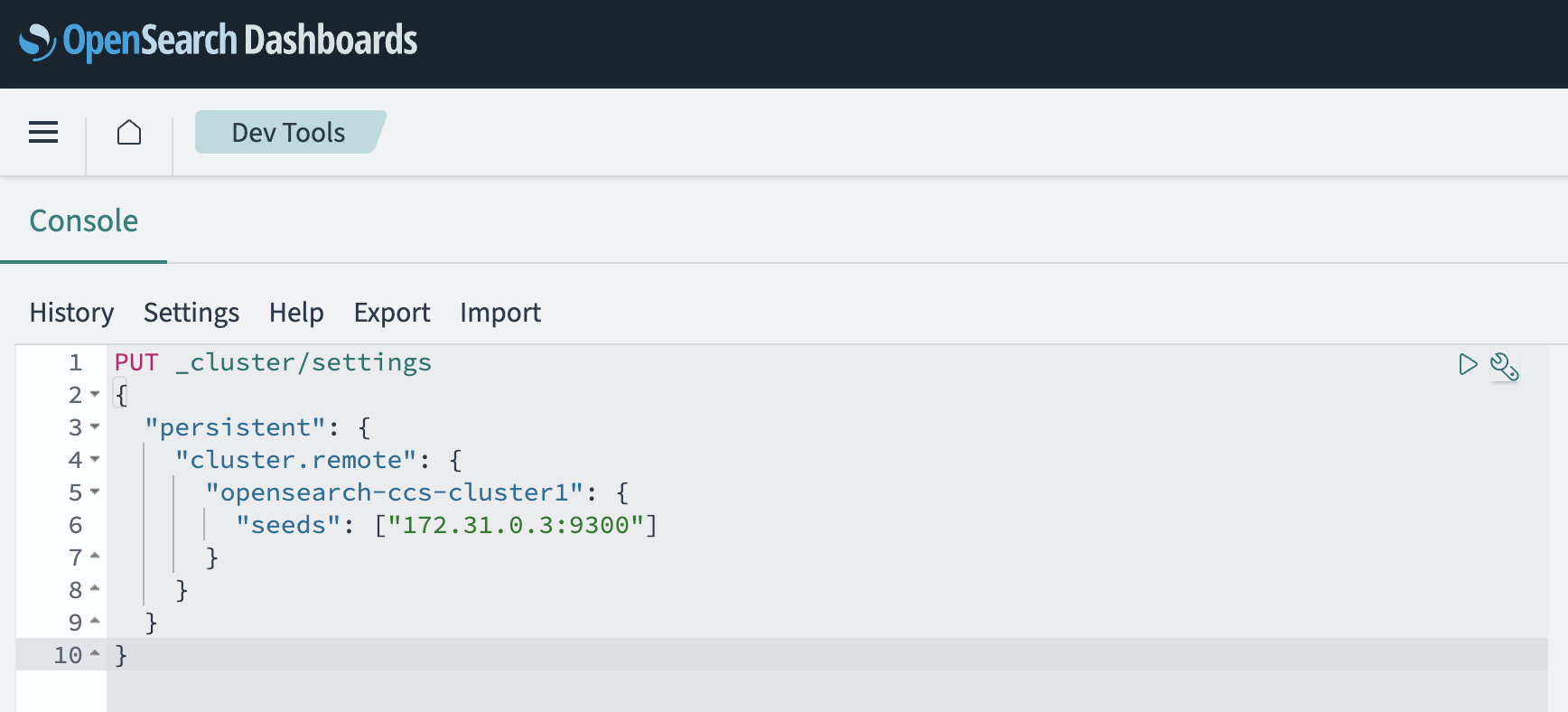

On the coordinating cluster, add the remote cluster name and the IP address (with port 9300) for each "seed node." In this case, you only have one seed node:

curl -k -XPUT -H 'Content-Type: application/json' -u 'admin:<custom-admin-password>' 'https://localhost:9250/_cluster/settings' -d '
{
  "persistent": {
    "cluster.remote": {
      "lucenia-ccs-cluster1": {
        "seeds": ["172.31.0.3:9300"]
      }
    }
  }
}'
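Before indexing data, it can be useful to confirm that the coordinating cluster registered the remote cluster. The sketch below assumes that Lucenia exposes the _remote/info endpoint the way OpenSearch does; that endpoint is an assumption not confirmed by this page, and the helper name remote_info is hypothetical.

```shell
# Assumption: GET /_remote/info is available, as it is in OpenSearch.
# Hypothetical helper. Arguments: <base-url> <user:password>
remote_info() {
  curl -XGET -k -u "$2" "$1/_remote/info?pretty"
}

# Usage against the coordinating cluster from the Docker sample:
# remote_info 'https://localhost:9250' 'admin:<custom-admin-password>'
```

If the endpoint is available, the response should list the lucenia-ccs-cluster1 alias along with its seed address.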

All of the cURL requests can also be sent using OpenSearch Dashboards Dev Tools.

The following image shows an example of a cURL request using Dev Tools.

On the remote cluster, index a document:

curl -XPUT -k -H 'Content-Type: application/json' -u 'admin:<custom-admin-password>' 'https://localhost:9200/books/_doc/1' -d '{"Dracula": "Bram Stoker"}'

At this point, cross-cluster search works. You can test it using the admin user:

curl -XGET -k -u 'admin:<custom-admin-password>' 'https://localhost:9250/lucenia-ccs-cluster1:books/_search?pretty'

{
  ...
  "hits": [{
    "_index": "lucenia-ccs-cluster1:books",
    "_id": "1",
    "_score": 1.0,
    "_source": {
      "Dracula": "Bram Stoker"
    }
  }]
}

To continue testing, create a new user on both clusters:

curl -XPUT -k -u 'admin:<custom-admin-password>' 'https://localhost:9200/_plugins/_security/api/internalusers/booksuser' -H 'Content-Type: application/json' -d '{"password":"password"}'

curl -XPUT -k -u 'admin:<custom-admin-password>' 'https://localhost:9250/_plugins/_security/api/internalusers/booksuser' -H 'Content-Type: application/json' -d '{"password":"password"}'

Then run the same search as before with booksuser:

curl -XGET -k -u booksuser:password 'https://localhost:9250/lucenia-ccs-cluster1:books/_search?pretty'

{
  "error" : {
    "root_cause" : [
      {
        "type" : "security_exception",
        "reason" : "no permissions for [indices:admin/shards/search_shards, indices:data/read/search] and User [name=booksuser, roles=[], requestedTenant=null]"
      }
    ],
    "type" : "security_exception",
    "reason" : "no permissions for [indices:admin/shards/search_shards, indices:data/read/search] and User [name=booksuser, roles=[], requestedTenant=null]"
  },
  "status" : 403
}

Note the permissions error. On the remote cluster, create a role with the appropriate permissions, and map booksuser to that role:

curl -XPUT -k -u 'admin:<custom-admin-password>' -H 'Content-Type: application/json' 'https://localhost:9200/_plugins/_security/api/roles/booksrole' -d '{"index_permissions":[{"index_patterns":["books"],"allowed_actions":["indices:admin/shards/search_shards","indices:data/read/search"]}]}'

curl -XPUT -k -u 'admin:<custom-admin-password>' -H 'Content-Type: application/json' 'https://localhost:9200/_plugins/_security/api/rolesmapping/booksrole' -d '{"users" : ["booksuser"]}'

Both clusters must have the user, but only the remote cluster needs the role and the role mapping. In this case, the coordinating cluster handles authentication (that is, "Does this request include valid user credentials?"), and the remote cluster handles authorization (that is, "Can this user access this data?").
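The split described above can be summarized as a checklist of Security API resources per cluster. The following sketch only prints the URLs to inspect; it assumes that the internalusers, roles, and rolesmapping endpoints used earlier with PUT also answer GET requests, and the helper name ccs_security_checklist is hypothetical.

```shell
# Hypothetical helper: print the Security API URLs worth checking.
# The coordinating cluster only needs the user; the remote cluster needs
# the user, the role, and the role mapping.
# Arguments: <coordinating-base-url> <remote-base-url>
ccs_security_checklist() {
  coordinating=$1
  remote=$2
  api='_plugins/_security/api'
  printf '%s/%s/internalusers/booksuser\n' "$coordinating" "$api"
  printf '%s/%s/internalusers/booksuser\n' "$remote" "$api"
  printf '%s/%s/roles/booksrole\n' "$remote" "$api"
  printf '%s/%s/rolesmapping/booksrole\n' "$remote" "$api"
}

ccs_security_checklist 'https://localhost:9250' 'https://localhost:9200'
```

Each printed URL can be passed to curl -XGET -k -u 'admin:<custom-admin-password>' to confirm that the resource exists on that cluster.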

Finally, repeat the search:

curl -XGET -k -u booksuser:password 'https://localhost:9250/lucenia-ccs-cluster1:books/_search?pretty'

{
  ...
  "hits": [{
    "_index": "lucenia-ccs-cluster1:books",
    "_id": "1",
    "_score": 1.0,
    "_source": {
      "Dracula": "Bram Stoker"
    }
  }]
}

Sample bare metal/virtual machine setup

If you are running Lucenia on a bare metal server or using a virtual machine, you can run the same commands, specifying the IP (or domain) of the Lucenia cluster. For example, in order to configure a remote cluster for cross-cluster search, find the IP of the remote node or domain of the remote cluster and run the following command:

curl -k -XPUT -H 'Content-Type: application/json' -u 'admin:<custom-admin-password>' 'https://lucenia-domain-1:9200/_cluster/settings' -d '
{
  "persistent": {
    "cluster.remote": {
      "lucenia-ccs-cluster2": {
        "seeds": ["lucenia-domain-2:9300"]
      }
    }
  }
}'

It is sufficient to point to only one of the node IPs on the remote cluster because all nodes in the cluster will be queried as part of the node discovery process.
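Although one seed is enough, the seeds setting is an array, so more than one address can be listed, which may help if a single seed node is unavailable when the connection is first made. The following sketch builds the settings body for any number of seeds; the helper name remote_seeds_body and the second host name lucenia-domain-3 are hypothetical.

```shell
# Hypothetical helper: print a cluster settings body that registers a
# remote cluster. Arguments: <cluster-alias> <seed> [<seed>...]
remote_seeds_body() {
  alias_name=$1
  shift
  seeds=''
  for seed in "$@"; do
    # Quote each seed and put a comma before every entry except the first
    seeds="${seeds:+$seeds,}\"$seed\""
  done
  printf '{"persistent":{"cluster.remote":{"%s":{"seeds":[%s]}}}}\n' \
    "$alias_name" "$seeds"
}

# lucenia-domain-3 is a made-up second node used only for illustration
remote_seeds_body 'lucenia-ccs-cluster2' 'lucenia-domain-2:9300' 'lucenia-domain-3:9300'
```

The printed body can be passed to the _cluster/settings request shown above using the -d option.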

You can now run queries across both clusters:

curl -XGET -k -u 'admin:<custom-admin-password>' 'https://lucenia-domain-1:9200/lucenia-ccs-cluster2:books/_search?pretty'

{
  ...
  "hits": [{
    "_index": "lucenia-ccs-cluster2:books",
    "_id": "1",
    "_score": 1.0,
    "_source": {
      "Dracula": "Bram Stoker"
    }
  }]
}

Sample Kubernetes/Helm setup

If you are using Kubernetes clusters to deploy Lucenia, you need to configure the remote cluster using either a LoadBalancer or an Ingress. The Kubernetes services created using the following Helm example are of the ClusterIP type and are only accessible from within the cluster; therefore, you must use an externally accessible endpoint:

curl -k -XPUT -H 'Content-Type: application/json' -u 'admin:<custom-admin-password>' 'https://lucenia-domain-1:9200/_cluster/settings' -d '
{
  "persistent": {
    "cluster.remote": {
      "lucenia-ccs-cluster2": {
        "seeds": ["ingress:9300"]
      }
    }
  }
}'

Proxy settings

You can configure cross-cluster search on a cluster running behind a proxy. There are many proxies to choose from and many ways to configure a reverse proxy; the following example demonstrates a basic NGINX reverse proxy configuration without TLS termination. For this example to work, Lucenia must have both transport and HTTP TLS encryption enabled. For more information about configuring TLS encryption, see Configuring TLS certificates.

Prerequisites

To use proxy mode, fulfill the following prerequisites:

- Make sure that the source cluster's nodes are able to connect to the configured proxy_address.

- Make sure that the proxy can route connections to the remote cluster nodes.
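The first prerequisite can be checked from a source cluster node with a plain TCP connection test. The following is a minimal sketch that assumes an nc (netcat) build supporting the -z and -w options; the helper name can_connect is hypothetical, and the host and port in the usage comment match the proxy example in this section.

```shell
# Hypothetical helper: succeed if a TCP connection to <host> <port> opens
# within 5 seconds. Assumes an nc (netcat) build that supports -z and -w.
can_connect() {
  nc -z -w 5 "$1" "$2"
}

# Usage from a source cluster node:
# can_connect '<remote-cluster-proxy>' 8300 && echo 'proxy reachable'
```

A successful connection only shows that the port is reachable; it does not confirm that the proxy routes traffic to the remote cluster nodes.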

Proxy configuration

The following is the basic NGINX configuration for HTTP and transport communication:

stream {
    upstream lucenia-transport {
        server <lucenia>:9300;
    }
    upstream lucenia-http {
        server <lucenia>:9200;
    }
    server {
        listen 8300;
        ssl_certificate /.../0.9.0/config/esnode.pem;
        ssl_certificate_key /.../0.9.0/config/esnode-key.pem;
        ssl_trusted_certificate /.../0.9.0/config/root-ca.pem;
        proxy_pass lucenia-transport;
        ssl_preread on;
    }
    server {
        listen 443;
        listen [::]:443;
        ssl_certificate /.../0.9.0/config/esnode.pem;
        ssl_certificate_key /.../0.9.0/config/esnode-key.pem;
        ssl_trusted_certificate /.../0.9.0/config/root-ca.pem;
        proxy_pass lucenia-http;
        ssl_preread on;
    }
}

The listening ports for HTTP and transport communication are set to 443 and 8300, respectively.

Lucenia configuration

The remote cluster can be configured to point to the proxy by using the following command:

curl -k -XPUT -H 'Content-Type: application/json' -u 'admin:<custom-admin-password>' 'https://lucenia:9200/_cluster/settings' -d '
{
  "persistent": {
    "cluster.remote": {
      "lucenia-remote-cluster": {
        "mode": "proxy",
        "proxy_address": "<remote-cluster-proxy>:8300"
      }
    }
  }
}'

Note that port 8300 in proxy_address is the transport listening port configured earlier in the Proxy configuration section.